DEDA for Media

Media organisations are under growing pressure to experiment with AI while safeguarding the values that define journalism. AI can improve efficiency, analysis, and content production, but it also raises difficult questions: how can editorial independence be preserved, how are risks to the public addressed, and how can organisations maintain trust while adopting new technologies?

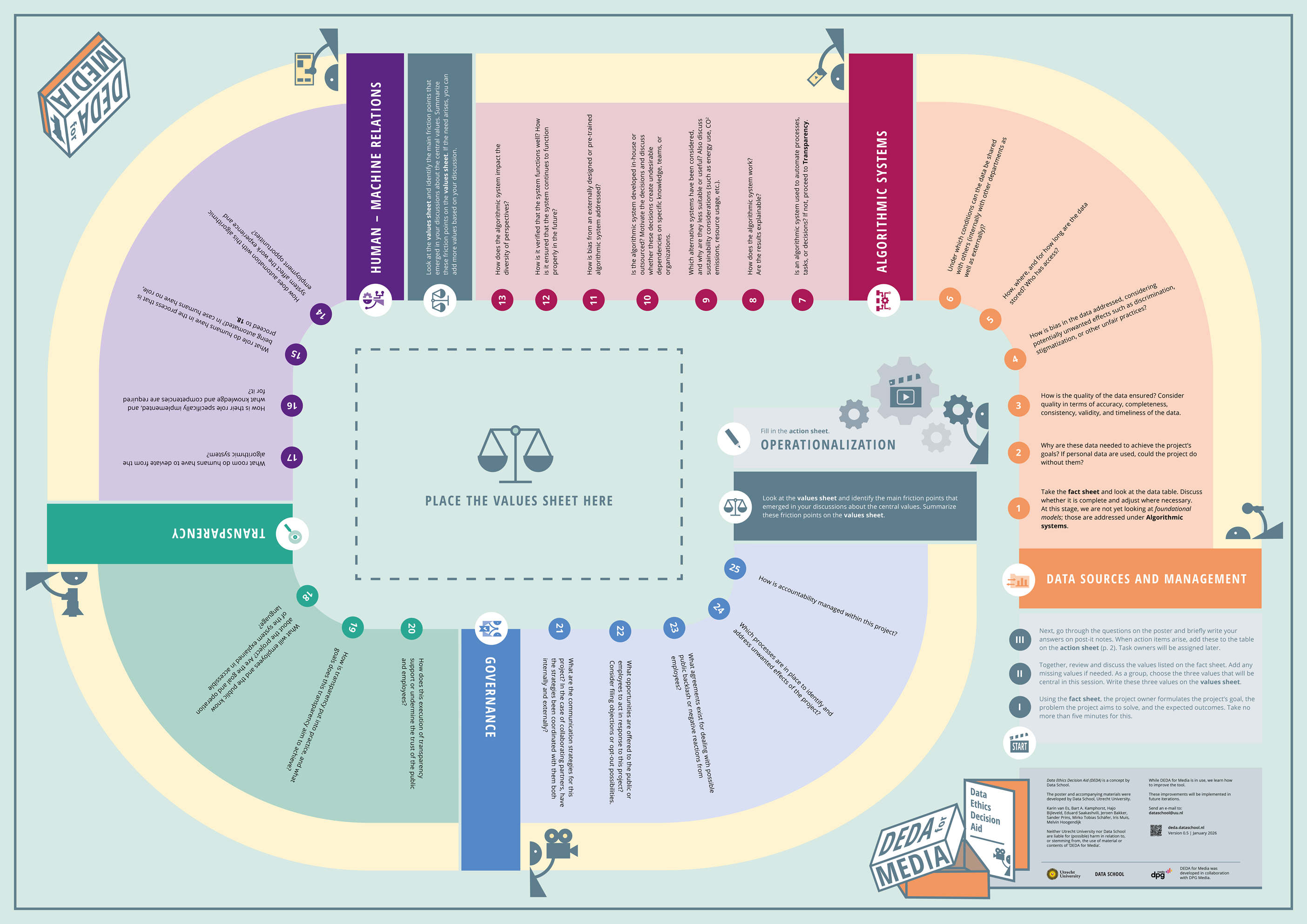

DEDA for Media (D4M) was developed to help media organisations navigate these decisions. It is a structured, dialogue-based tool that enables teams to examine ethical and societal questions in data and AI projects before systems are implemented. The materials will be made freely available and are designed for practical use within organisations.

D4M is presented as a large-format (A0) poster that teams work through together. The process helps identify and document tensions between design choices, project goals, and journalistic values. It guides structured reflection on the purpose of a project, the data and technologies involved, and the potential consequences for audiences, editorial staff, and the organisation, so that teams can recognise risks and blind spots early and address them more effectively.

The process supports better decision-making and contributes to more responsible and robust AI applications. It also makes transparent how choices are made, which strengthens accountability within the organisation and builds trust with partners and the public.

Working through the poster together typically results in concrete action points that help align AI and data projects with the organisation’s mission and values. In practice, D4M also strengthens digital and ethical literacy within teams, enabling organisations to approach AI adoption more confidently and responsibly.

D4M will be released in the course of 2026.